Scalable Log Processing System

This document describes the design of a high-throughput log processing system that handles millions of events per day and supports real-time monitoring and alerting.

Problem

Modern applications generate massive volumes of logs. Logging setups that were not designed for this volume fail to scale, which delays insights and slows incident response.

- High log volume (1M+ events/day)

- Real-time processing

- Reliable storage and search
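To put the volume requirement in perspective, the following sketch converts a daily event count into an average rate and a raw daily volume. The peak factor of 10 and the 500-byte average event size are illustrative assumptions, not figures from this design.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def average_rate(events_per_day: int) -> float:
    """Average events per second for a given daily volume."""
    return events_per_day / SECONDS_PER_DAY


def daily_volume_gb(events_per_day: int, avg_event_bytes: int) -> float:
    """Raw (uncompressed, unreplicated) log volume per day in gigabytes."""
    return events_per_day * avg_event_bytes / 1e9


if __name__ == "__main__":
    events = 1_000_000   # from the requirement above
    peak_factor = 10     # assumption: bursts reach 10x the average rate
    event_bytes = 500    # assumption: average serialized event size

    avg = average_rate(events)
    print(f"average: {avg:.1f} events/s")                  # average: 11.6 events/s
    print(f"assumed peak: {avg * peak_factor:.0f} events/s")  # assumed peak: 116 events/s
    print(f"raw volume: {daily_volume_gb(events, event_bytes):.2f} GB/day")  # raw volume: 0.50 GB/day
```

At 1M events/day the average rate is about 11.6 events/s, which suggests that sizing is driven more by burst traffic and retention than by the daily average.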

Architecture

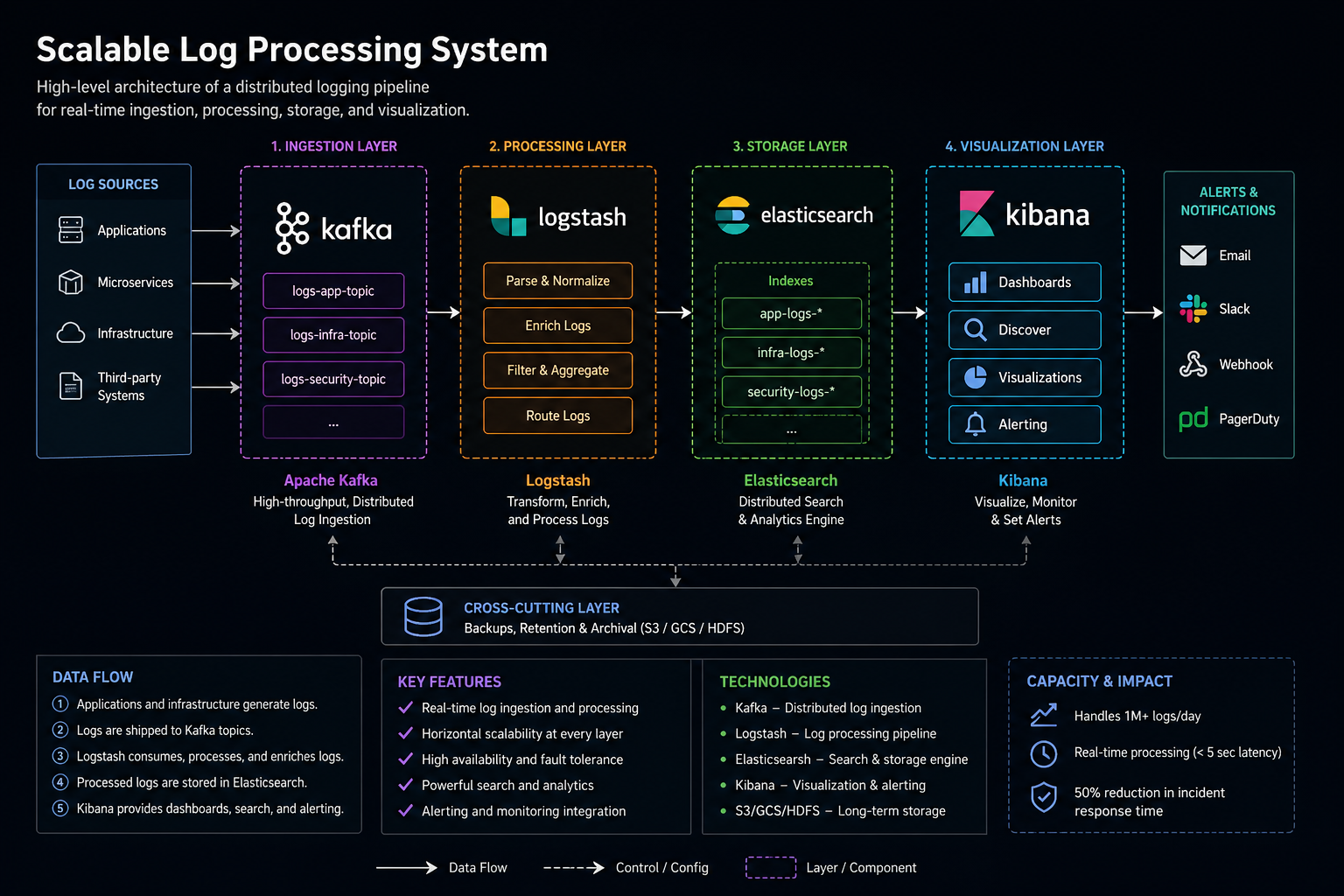

The system is built as a distributed pipeline so that each stage can scale on its own and logs already buffered upstream survive a failure in a later stage.

- Producers: Applications generate logs

- Kafka: Handles ingestion and buffering

- Logstash: Processes and transforms logs

- Elasticsearch: Stores and indexes logs

- Kibana: Visualization and monitoring
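The flow through these stages can be sketched with in-memory stand-ins. This is a toy model only: a `queue.Queue` plays the role of the Kafka buffer, a dictionary plays the role of the Elasticsearch index, and the `LEVEL service message` line format and the function names are hypothetical, chosen for illustration.

```python
import queue
from collections import defaultdict
from typing import Dict, List, Optional


def transform(raw_line: str) -> Optional[dict]:
    """Parse 'LEVEL service message' into a structured document (the Logstash role).

    Returns None for lines that do not match the assumed format.
    """
    parts = raw_line.split(" ", 2)
    if len(parts) != 3:
        return None
    level, service, message = parts
    return {"level": level.upper(), "service": service, "message": message}


def run_pipeline(raw_lines: List[str]) -> Dict[str, List[dict]]:
    """Push lines through buffer -> transform -> index and return the index."""
    buffer: queue.Queue = queue.Queue()   # stand-in for a Kafka topic
    for line in raw_lines:                # producers write to the buffer
        buffer.put(line)

    index = defaultdict(list)             # stand-in for an Elasticsearch index
    while not buffer.empty():
        doc = transform(buffer.get())
        if doc is not None:               # malformed lines are dropped here
            index[doc["level"]].append(doc)
    return dict(index)


if __name__ == "__main__":
    logs = [
        "error checkout payment gateway timeout",
        "info auth user logged in",
        "malformed",
    ]
    result = run_pipeline(logs)
    print(sorted(result))                  # ['ERROR', 'INFO']
    print(result["ERROR"][0]["service"])   # checkout
```

Because the buffer sits between producers and the transform step, producers never wait on parsing or indexing, which is the decoupling that Kafka provides in the real pipeline.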

Architecture Diagram

Kafka (ingestion and buffering) → Logstash (log transformation) → Elasticsearch (indexing and search) → Kibana (visualization dashboard)

Scaling Strategy

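- Kafka partitions for horizontal scaling: a topic is split into partitions, so ingestion and consumption can be spread across brokers and consumers

- Elasticsearch sharding for distributed storage: each index is split into shards that are distributed across nodes

- Stateless log processors for auto-scaling: processors keep no local state, so instances can be added or removed as load changes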
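The partitioning idea behind this strategy can be sketched as follows. This is a simplified model: it uses CRC32 as a stand-in hash (Kafka's default Java partitioner uses murmur2), and the round-robin assignment is only one of several strategies a consumer group can use. The function and worker names are hypothetical.

```python
import zlib
from typing import Dict, List


def partition_for(key: str, num_partitions: int) -> int:
    """Map a record key to a partition; the same key always maps to the same partition."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign_partitions(num_partitions: int, workers: List[str]) -> Dict[str, List[int]]:
    """Spread partitions across stateless workers in round-robin order."""
    assignment: Dict[str, List[int]] = {w: [] for w in workers}
    for p in range(num_partitions):
        assignment[workers[p % len(workers)]].append(p)
    return assignment


if __name__ == "__main__":
    # Logs keyed by service name keep per-service ordering within one partition.
    print(partition_for("checkout", 6) == partition_for("checkout", 6))  # True

    print(assign_partitions(6, ["w1", "w2"]))
    # {'w1': [0, 2, 4], 'w2': [1, 3, 5]}

    # Scaling out: adding a worker reduces the partitions each one handles.
    print(assign_partitions(6, ["w1", "w2", "w3"]))
    # {'w1': [0, 3], 'w2': [1, 4], 'w3': [2, 5]}
```

In this model the partition count caps useful parallelism: with 6 partitions, a seventh worker would receive nothing. Elasticsearch applies a similar hash-and-modulo idea when it routes a document to a shard.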

Trade-offs

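- Kafka adds operational complexity but persists logs to disk and can replicate them across brokers, so buffered logs survive a downstream outage

- Elasticsearch offers fast full-text search and aggregations but is resource-intensive, particularly in memory and disk

- Real-time processing increases infrastructure cost because the pipeline runs continuously and must be provisioned for peak load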